I thought I would blog this, since the first attempt left me with a pretty messed up site server. I upgraded and there was no root\SMS namespace in WMI and the site was screwed.

I reverted my Hyper-V snapshot (never do this in production, as it is not a supported method of recovery!) and started again. I believe the issue was caused by my stopping the SMS services on the site server. This is not mentioned in the MS docs (Upgrade on-premises infrastructure – Configuration Manager | Microsoft Docs), but I had done this on a previous upgrade from 2008 R2 five years ago, as documented in In-place upgrade SCCM CB 1602 Site Server from Windows 2008 R2 to 2012 R2 – SCCMentor – Paul Winstanley.

Start off the process by removing SCEP from the site server, as it’s not supported on Server 2019 and you’ll get a warning block when trying the upgrade.

To remove SCEP, run the following command: C:\Windows\ccmsetup\scepinstall.exe /u /s

Now, if you have WSUS running on the server, remove the role, but first detach the SUSDB so it can be re-attached after the upgrade.

Stop the IIS and WSUS services on the WSUS server by running the following commands in PowerShell:

Stop-Service IISADMIN

Stop-Service WsusService

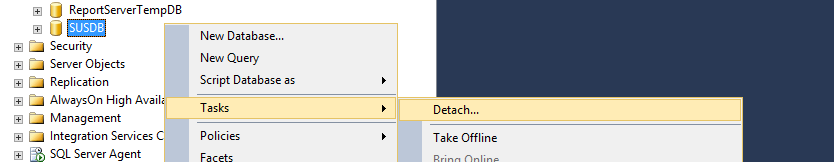

Load up SQL Management Studio and navigate to the SUSDB.

Right-click the SUSDB and select Tasks\Detach.

If active connections exist, tick the Drop Connections checkbox.

At this stage, I took a copy of the SUSDB.mdf and SUSDB_log.ldf files as a precaution. Note that the DB will disappear from view now that it’s detached.
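The same detach can be scripted rather than clicked through. This is a minimal sketch, assuming the SqlServer PowerShell module (for Invoke-Sqlcmd) is installed and that SUSDB sits on the local default SQL instance. The function names New-SusDbDetachQuery and Dismount-SusDb are my own for illustration and are not part of WSUS or SQL Server. Stop the IIS and WSUS services first, as above.

```powershell
# Hypothetical helper: builds the T-SQL equivalent of Tasks\Detach with Drop Connections ticked.
function New-SusDbDetachQuery {
    param([string]$Database = 'SUSDB')
    # SINGLE_USER WITH ROLLBACK IMMEDIATE kicks out any active connections before the detach.
    return @"
ALTER DATABASE [$Database] SET SINGLE_USER WITH ROLLBACK IMMEDIATE;
EXEC master.dbo.sp_detach_db @dbname = N'$Database';
"@
}

# Hypothetical wrapper: runs the detach against the given instance.
function Dismount-SusDb {
    param([string]$ServerInstance = 'localhost')   # assumption: local default instance
    Invoke-Sqlcmd -ServerInstance $ServerInstance -Database 'master' -Query (New-SusDbDetachQuery)
}
```

Running Dismount-SusDb from an elevated PowerShell session should leave the .mdf and .ldf files in place, ready to be copied.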

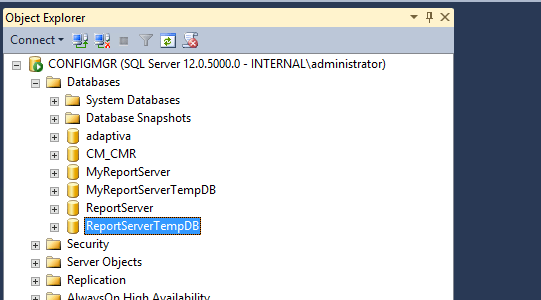

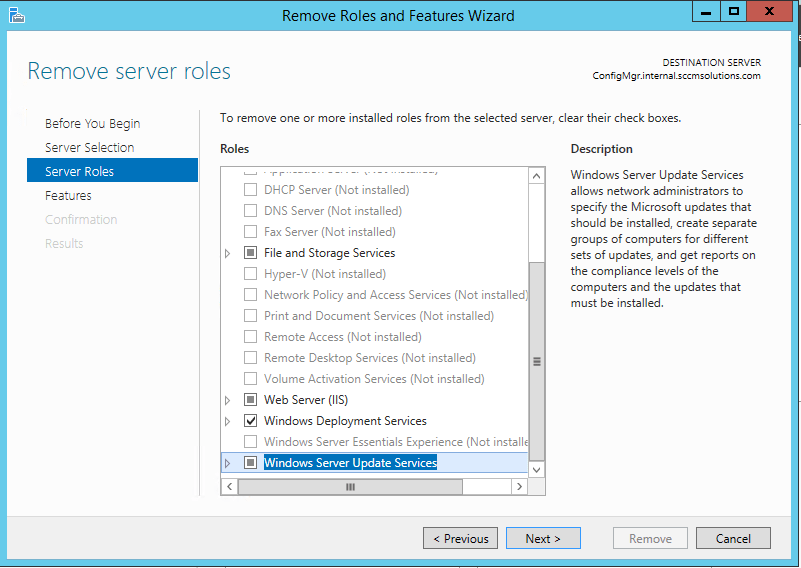

In Server Manager, click Manage and Remove Roles and Features.

Remove the Windows Server Update Services role by clicking on it.

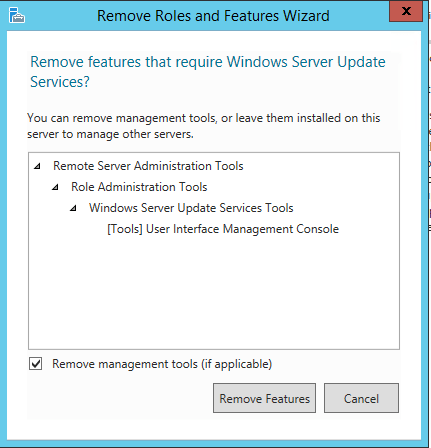

Remove the features.

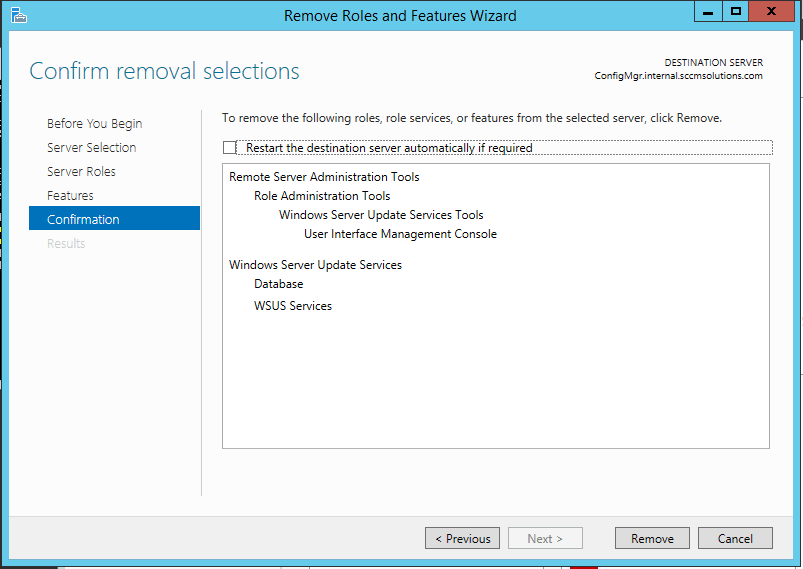

Click through the wizard and remove the role.

The docs also recommend the following for the site server:

- If you’re upgrading the OS of the site server, make sure file-based replication is healthy for the site. Check all inboxes for a backlog on both sending and receiving sites. If there are lots of stuck or pending replication jobs, wait until they clear out.

- On the sending site, review sender.log.

- On the receiving site, review despooler.log.
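For a quick view of whether any inbox has a backlog, a file count per inbox folder is a reasonable first check. The sketch below is only that: Get-InboxBacklog is a hypothetical helper name, and it simply counts files under each subfolder of whatever path you pass in.

```powershell
# Hypothetical helper: counts the files sitting in each inbox folder, busiest first.
function Get-InboxBacklog {
    param([Parameter(Mandatory)][string]$InboxRoot)
    Get-ChildItem -Path $InboxRoot -Directory | ForEach-Object {
        # @() forces an array so .Count is reliable for zero or one file
        $files = @(Get-ChildItem -Path $_.FullName -File -Recurse)
        [pscustomobject]@{ Inbox = $_.Name; Files = $files.Count }
    } | Sort-Object -Property Files -Descending
}
```

On a default install the call would look something like Get-InboxBacklog -InboxRoot 'C:\Program Files\Microsoft Configuration Manager\inboxes' (the path is an assumption, adjust it for your install directory). A large number that keeps growing is the sign to wait before upgrading.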

*** Update – see Update 22.01.23 at the end of the blog post ***

‘Following the before upgrade steps from the MS doc install the latest cumulative updates. Then uninstall the Windows Management Framework 5.1 (KB3191564) from Installed Updates’

**********************************************************

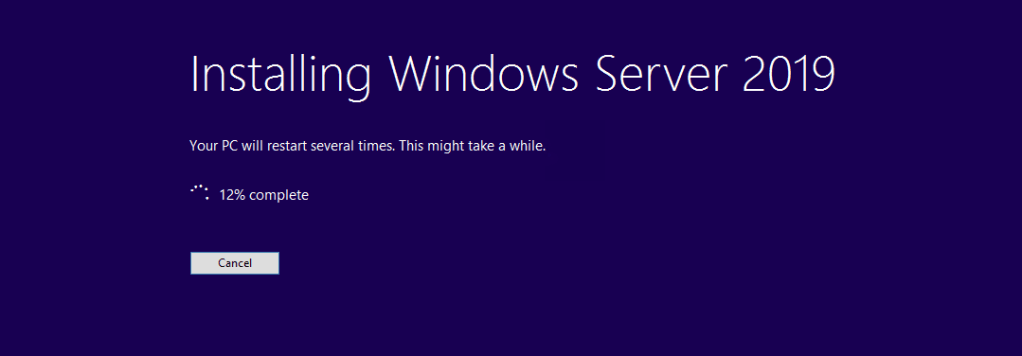

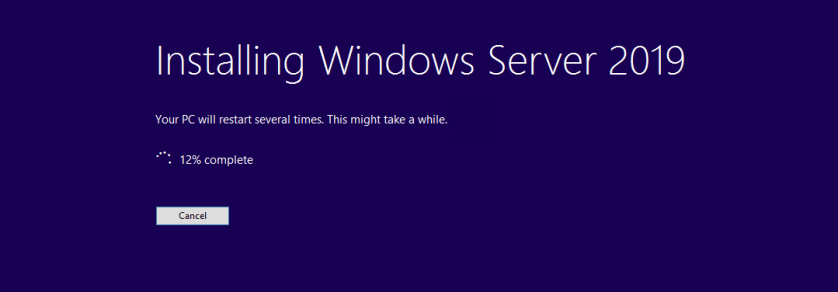

Now it’s time to upgrade the site server OS.

Mount the ISO and get started.

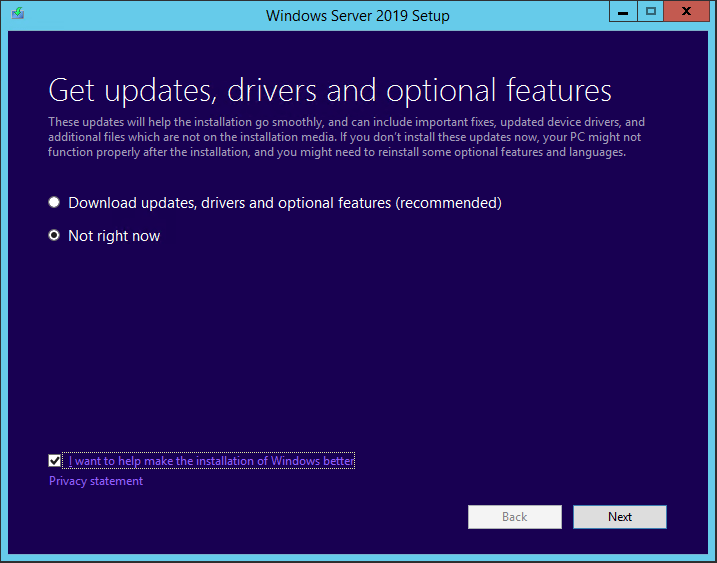

At this stage I decided I won’t apply updates. Click Next.

When prompted to enter a key, use the default KMS Client Setup Key for the OS edition you are upgrading to. I entered the KMS Client Setup Key for Server 2019 Standard edition. Click Next. Note that the keys are all listed at KMS client setup keys | Microsoft Docs.

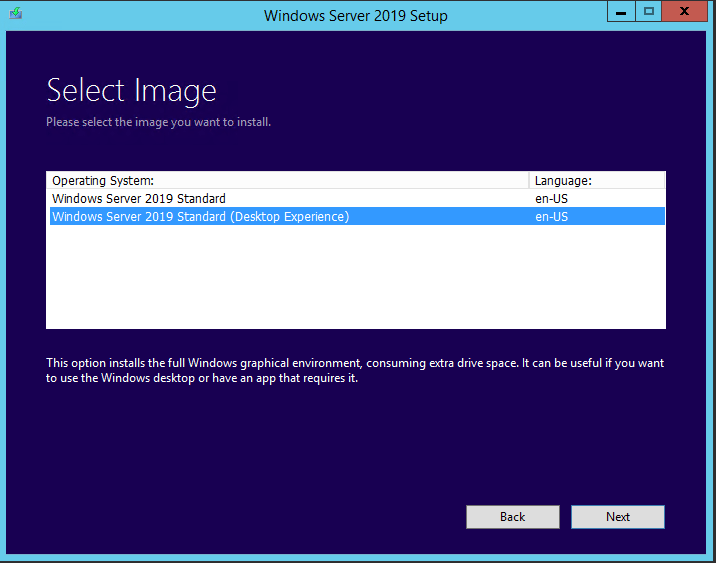

Select the image to install, 2019 Std (Desktop Experience) in my case, and click Next.

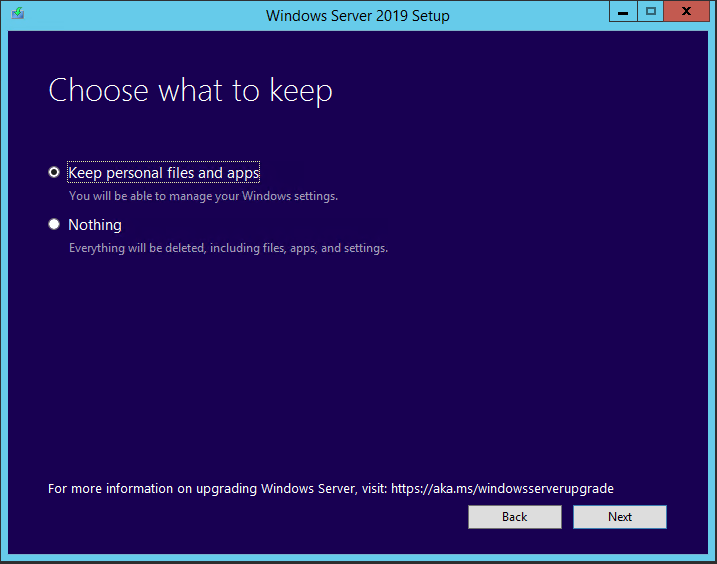

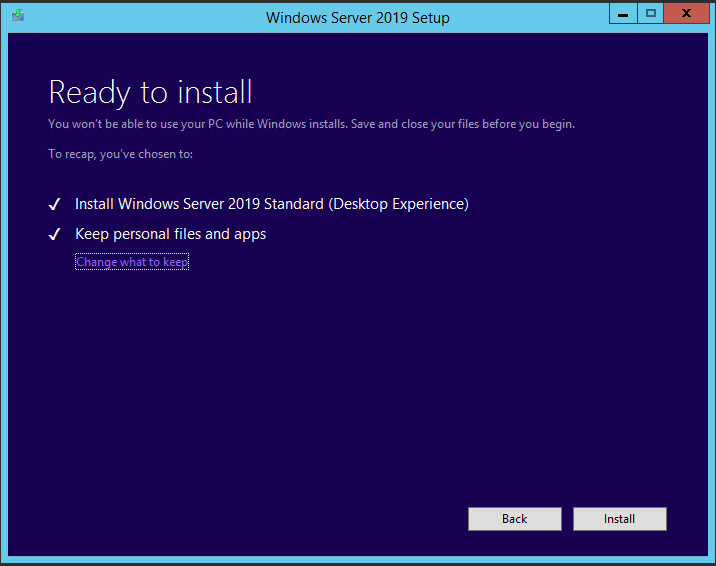

Accept the licence agreement and then you’ll be prompted with what to keep. Of course, I want to Keep personal files and apps. Click Next.

When prompted click Install.

Off goes the upgrade process.

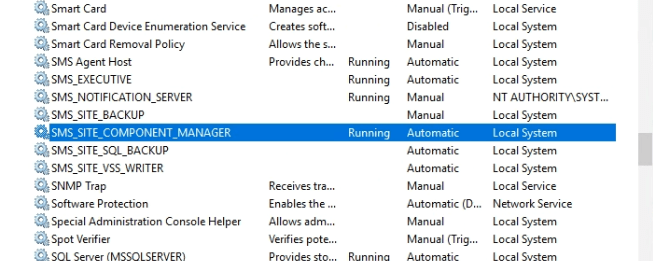

Post upgrade the root\SMS namespace was once again missing. The docs ask you to check the services SMS_EXECUTIVE & SMS_SITE_COMPONENT_MANAGER to see if they are running, which they weren’t, so I started those.

Also I am told to check the following are running:

- Windows Defender

- Windows Process Activation

- WWW/W3svc

These services were all running on my site server.
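These checks are easy to script if you want to repeat them after each attempt. A rough sketch follows; the function and variable names are mine, and WinDefend, WAS and W3SVC are the short service names for the display names listed above.

```powershell
# Short service names for the checks listed in the docs.
$SiteServerServices = @(
    'SMS_EXECUTIVE',
    'SMS_SITE_COMPONENT_MANAGER',
    'WinDefend',   # Windows Defender
    'WAS',         # Windows Process Activation Service
    'W3SVC'        # World Wide Web Publishing Service
)

# Hypothetical helper: lists the current state of each service that exists on this box.
function Get-SiteServerServiceState {
    Get-Service -Name $SiteServerServices -ErrorAction SilentlyContinue |
        Select-Object -Property Name, Status
}

# Hypothetical helper: returns $true if the SMS namespace exists under root in WMI.
function Test-SmsNamespace {
    $ns = Get-CimInstance -Namespace 'root' -ClassName '__NAMESPACE' -Filter "Name='SMS'" -ErrorAction SilentlyContinue
    return [bool]$ns
}
```

Run Get-SiteServerServiceState and Test-SmsNamespace from an elevated session on the site server. A result of False from the second one matches the missing root\SMS symptom described here.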

The next step is to issue a site reset. This will give the SCCM site a good old whack to re-evaluate and fix services. I noted that after this upgrade the root\SMS namespace was still missing, so I hoped that doing this would repair it (although my previous attempt did not recover it).

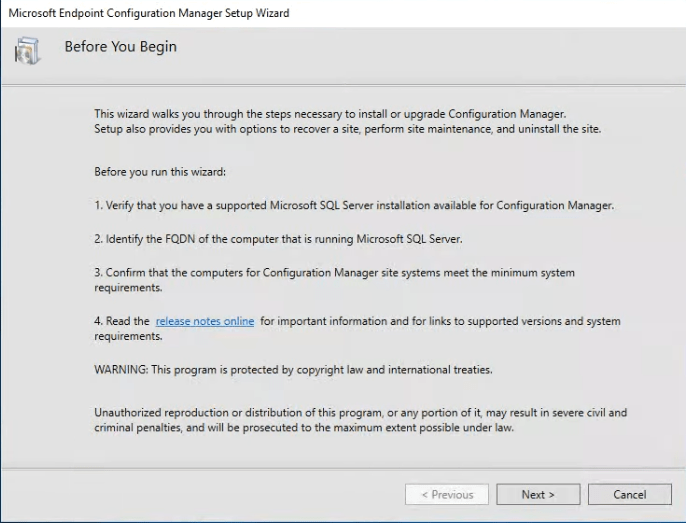

To run a site reset execute the following:

‘In the directory where Configuration Manager is installed, open \BIN\X64\setup.exe’.

Make sure you meet the prerequisites before you do; see Modify infrastructure – Configuration Manager | Microsoft Docs.

Click Next.

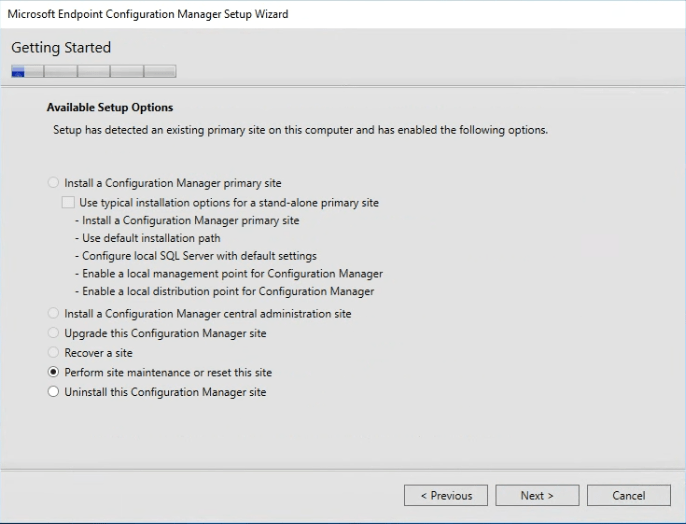

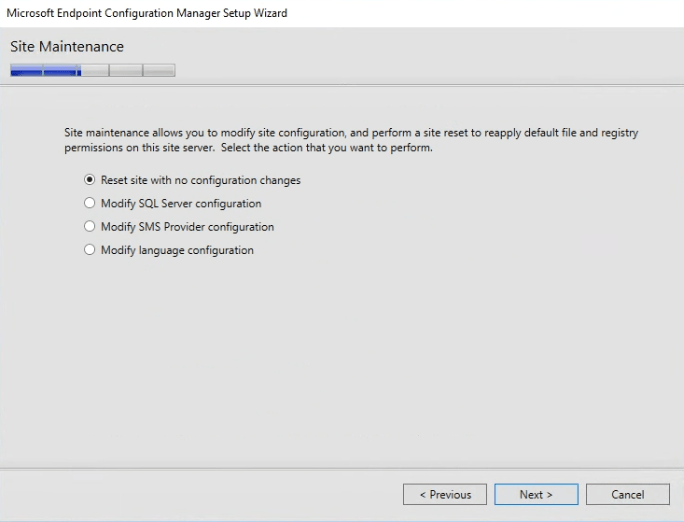

“Perform site maintenance or reset the site” should already be selected. If not, select it and click Next.

On the Site Maintenance page, select Reset site with no configuration changes.

Confirm that you want to do this.

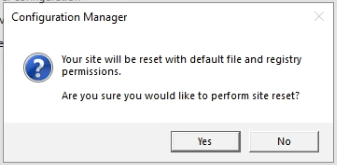

The site reset was struggling to stop the ConfigMgr service, so I had to issue a taskkill to forcibly terminate that service.
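If you hit the same thing, one way to find the right process to kill is to look up the PID behind the stuck service. This is only a sketch: Stop-ServiceProcess is a hypothetical helper, and which service name to pass (SMS_EXECUTIVE in the usage below) is an assumption, so check which service setup is actually waiting on before killing anything.

```powershell
# Hypothetical helper: force-kills the process hosting a named Windows service.
function Stop-ServiceProcess {
    param([Parameter(Mandatory)][string]$ServiceName)
    $svc = Get-CimInstance -ClassName Win32_Service -Filter "Name='$ServiceName'"
    if ($svc -and $svc.ProcessId -gt 0) {
        # ProcessId is 0 when the service is not running, so only kill a real PID
        taskkill.exe /PID $svc.ProcessId /F
    }
    else {
        Write-Warning "No running process found for service '$ServiceName'."
    }
}
```

Usage would be something like Stop-ServiceProcess -ServiceName 'SMS_EXECUTIVE' from an elevated prompt. Treat it as a last resort while the site reset is hanging, not as a routine way to stop services.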

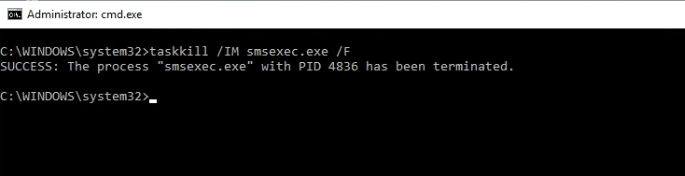

After running the site reset the WMI namespace root\SMS was back! Interesting as this did not happen the first time I tried this.

At this stage, when firing up the ConfigMgr console, there might be issues connecting to the site, so the recommendation is to set the permissions on the WMI namespace (see Upgrade on-premises infrastructure – Configuration Manager | Microsoft Docs).

The console fired up OK for me, but I’m going to check those permissions anyway.

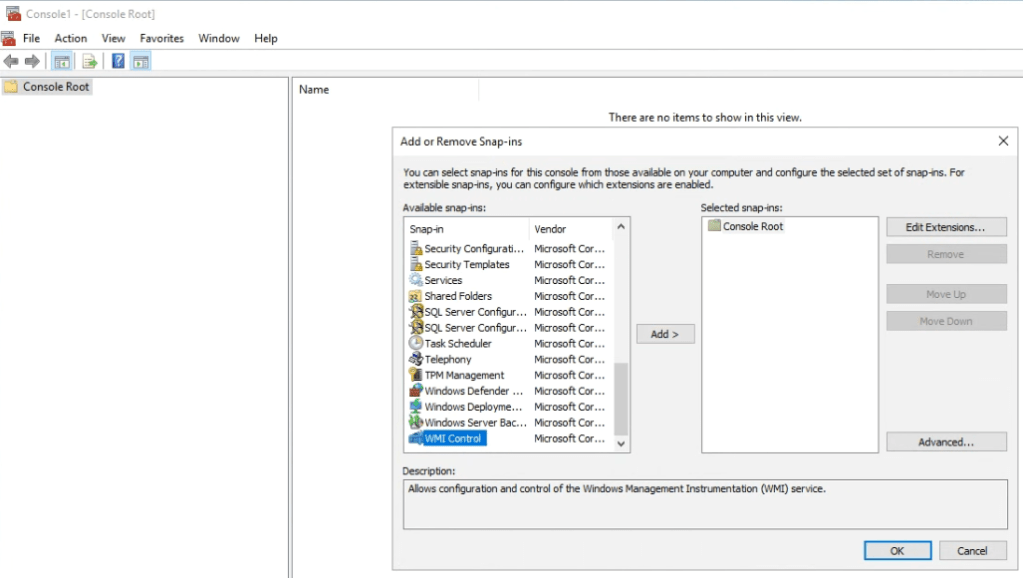

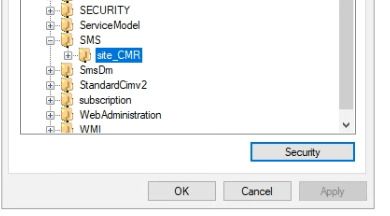

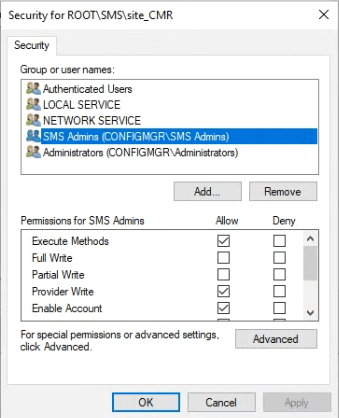

Run MMC, click File\Add/Remove Snap-in and choose the WMI Control. Click Add.

Choose Local computer and click Finish then click OK.

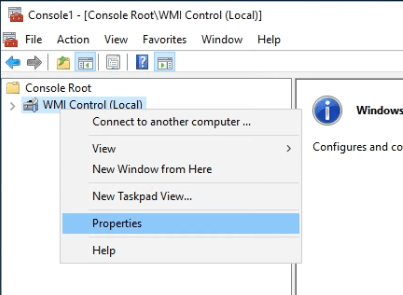

Right-click WMI Control (Local) and select Properties.

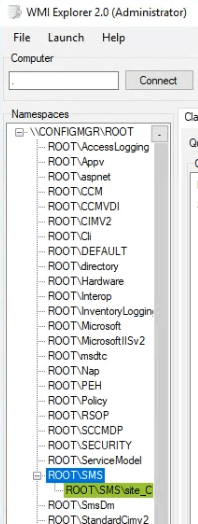

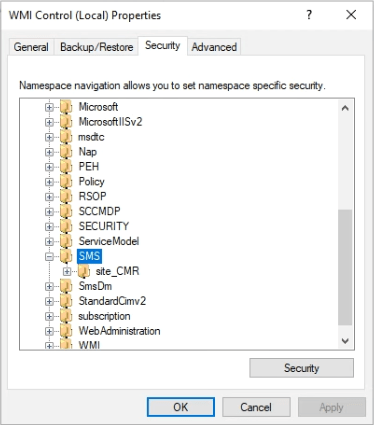

Go to the Security tab and drill down to the SMS namespace. Click Security.

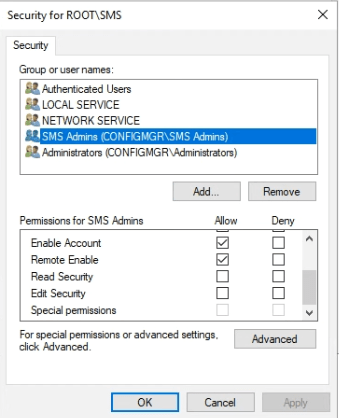

For the SMS Admins group, ensure the following are ticked:

- Enable Account

- Remote Enable

Now navigate to the site_<SiteCode> node and click Security.

Again, for the SMS Admins group, check the settings match the following:

- Execute Methods

- Provider Write

- Enable Account

- Remote Enable

So all these were OK on my site server and, as I say, the ConfigMgr console was loading up for me.

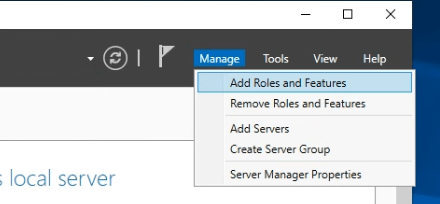

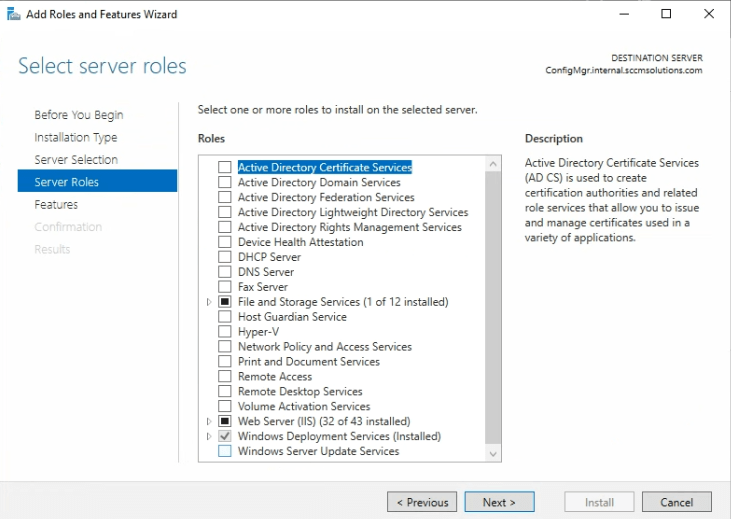

At this point, I need to reenable the WSUS role. So go back into Server Manager and click Manage\Add Roles and Features.

Click the Windows Server Update Services role.

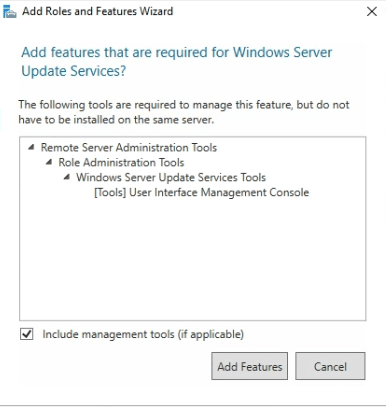

Add back the required features.

When clicking through the wizard, add in the role services which are relevant.

Enter the WSUS content path.

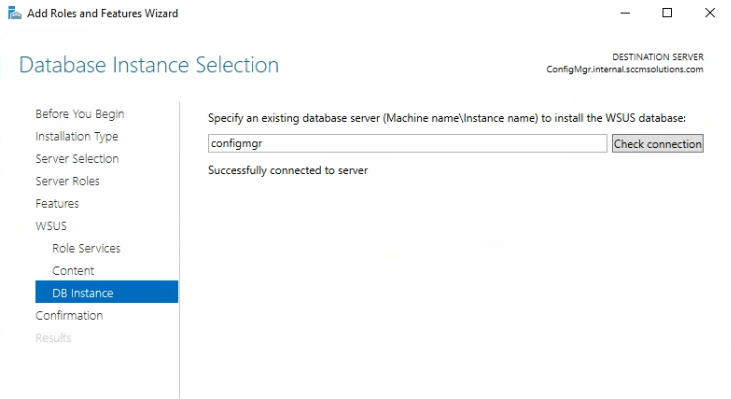

Then enter the database location.

I decided to let WSUS create the default SUSDB during its install. I will then detach this and attach my saved DB from earlier.

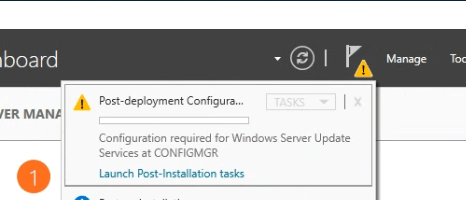

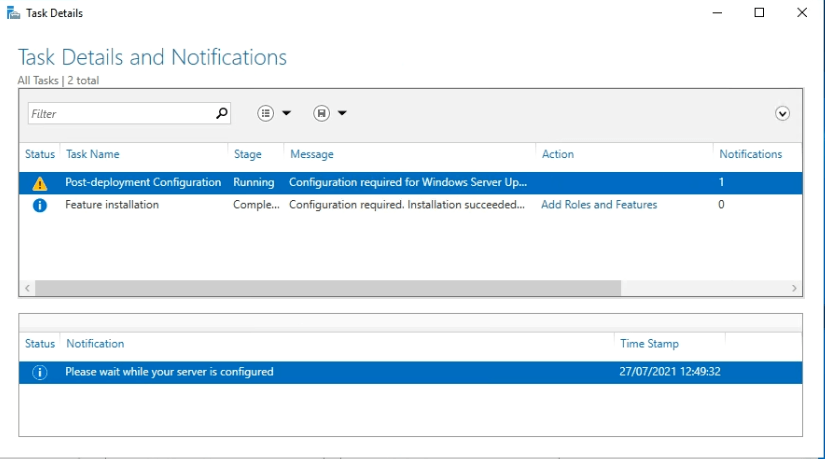

After install of the role has completed, remember to run the post installation tasks.

If these fail then check out why and resolve. Note the temp file created. This can be read in Notepad.

In my case, it was because I had copied the SUSDB files earlier but not removed them from the default location so the configuration couldn’t occur. So I deleted the files and ran the post-install config again.

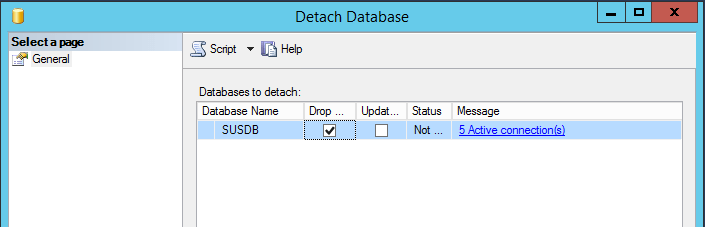

Next I needed to detach the default SUSDB using the method from previously, then remove the SUSDB.mdf and SUSDB_log.ldf files created by the WSUS role and copy back my backed up SUSDB files.

Now I need to attach the backed up DB.

Again I’m going to stop the services.

Run Stop-Service IISADMIN and then Stop-Service WsusService in PowerShell.

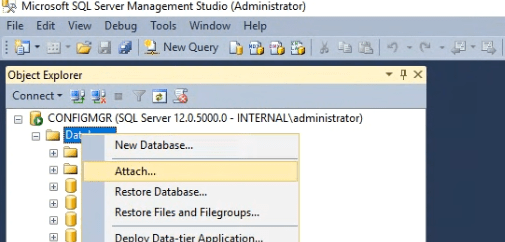

In SQL Management Studio, right-click Databases and click Attach.

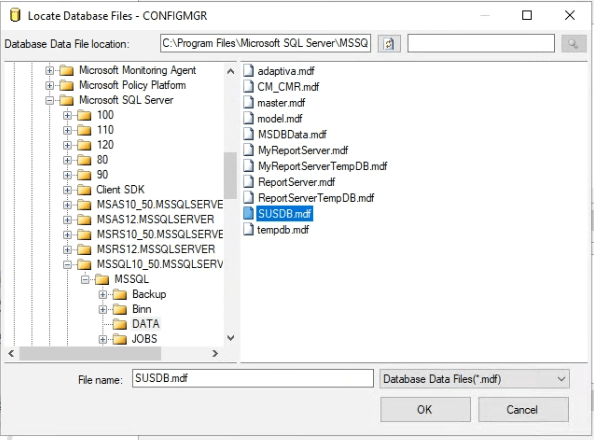

Click the Add button and browse to where the SUSDB.mdf is located. Select it and click OK.

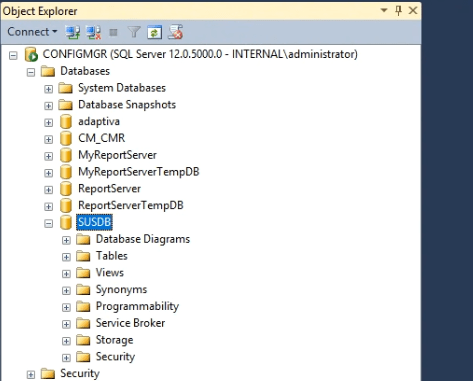

Click OK and the SUSDB will reattach.
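The attach can also be done with T-SQL from PowerShell. As before this is a hedged sketch, assuming the SqlServer module (Invoke-Sqlcmd) and a local default instance. New-SusDbAttachQuery and Mount-SusDb are made-up helper names, and you must supply your own file paths.

```powershell
# Hypothetical helper: builds the T-SQL to attach SUSDB from existing data and log files.
function New-SusDbAttachQuery {
    param(
        [Parameter(Mandatory)][string]$MdfPath,
        [Parameter(Mandatory)][string]$LdfPath
    )
    # SET MULTI_USER is harmless if the database is already multi-user.
    return @"
CREATE DATABASE [SUSDB]
ON (FILENAME = N'$MdfPath'), (FILENAME = N'$LdfPath')
FOR ATTACH;
ALTER DATABASE [SUSDB] SET MULTI_USER;
"@
}

# Hypothetical wrapper: runs the attach against the given instance.
function Mount-SusDb {
    param(
        [Parameter(Mandatory)][string]$MdfPath,
        [Parameter(Mandatory)][string]$LdfPath,
        [string]$ServerInstance = 'localhost'   # assumption: local default instance
    )
    $query = New-SusDbAttachQuery -MdfPath $MdfPath -LdfPath $LdfPath
    Invoke-Sqlcmd -ServerInstance $ServerInstance -Database 'master' -Query $query
}
```

Call Mount-SusDb with the full paths to the SUSDB.mdf and SUSDB_log.ldf files you copied back. The SQL service account needs access to both files.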

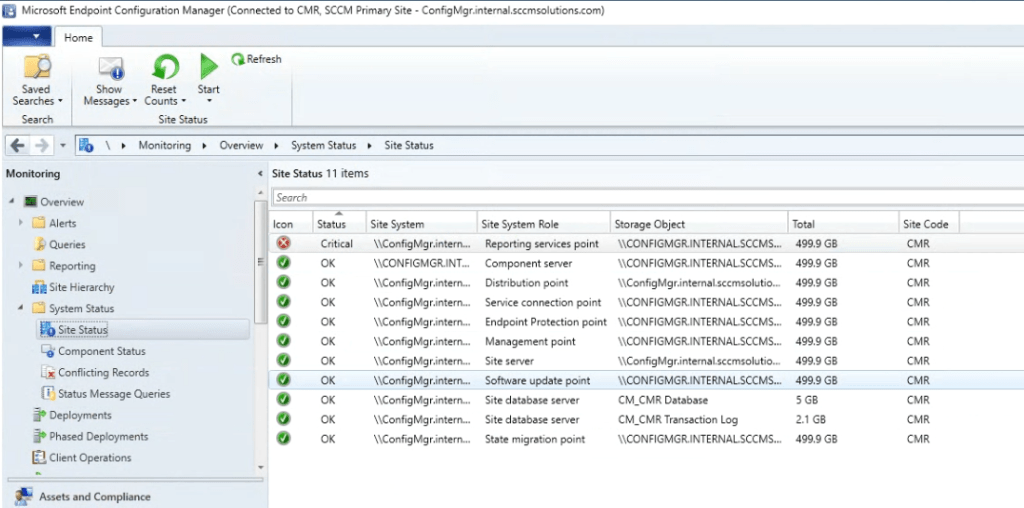

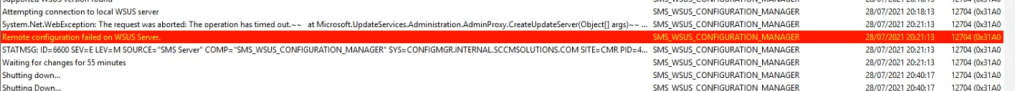

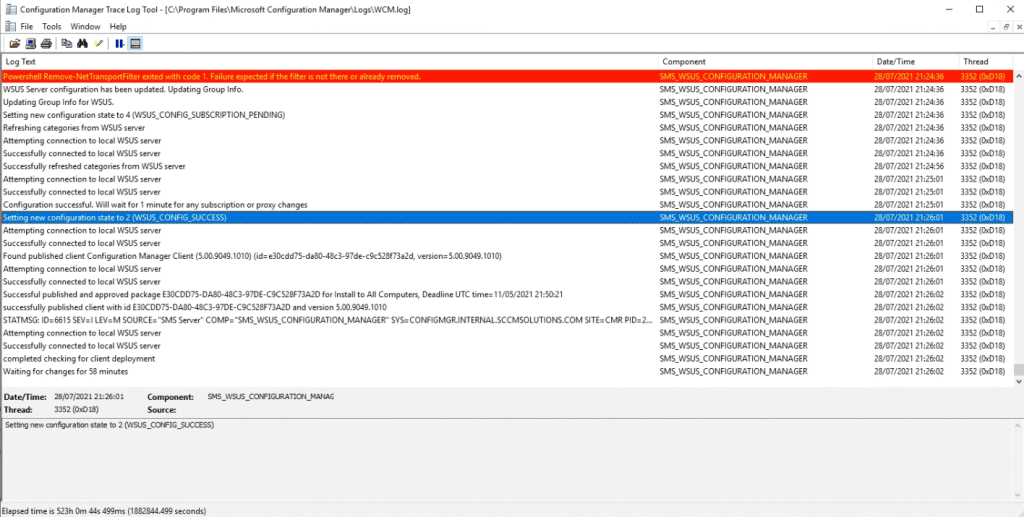

I checked the components moaning on the site. I noticed WSUS was a problem (I had SRS problems prior to upgrade so discounting these for now).

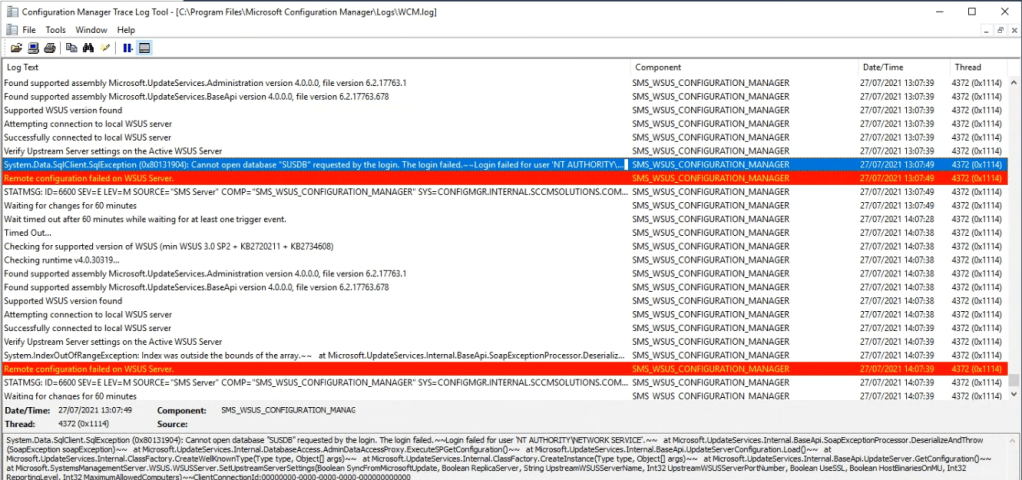

The WCM.log on the site server was complaining about accessing SUSDB, so that needs resolving.

I ran the command "C:\Program Files\Update Services\Tools\wsusutil.exe" postinstall /servicing and this completed successfully.

Shortly after things settled down and I was able to run a Software Update sync successfully and the site status came back all good for WSUS. Just that flaky reporting point – which as I said was already a problem for me.

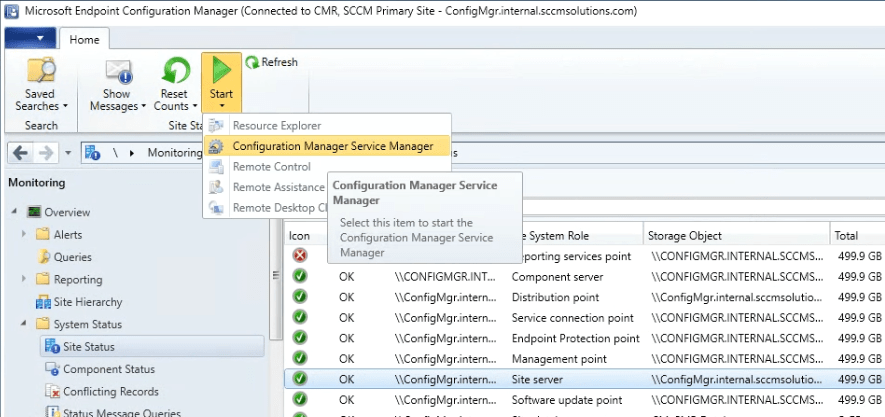

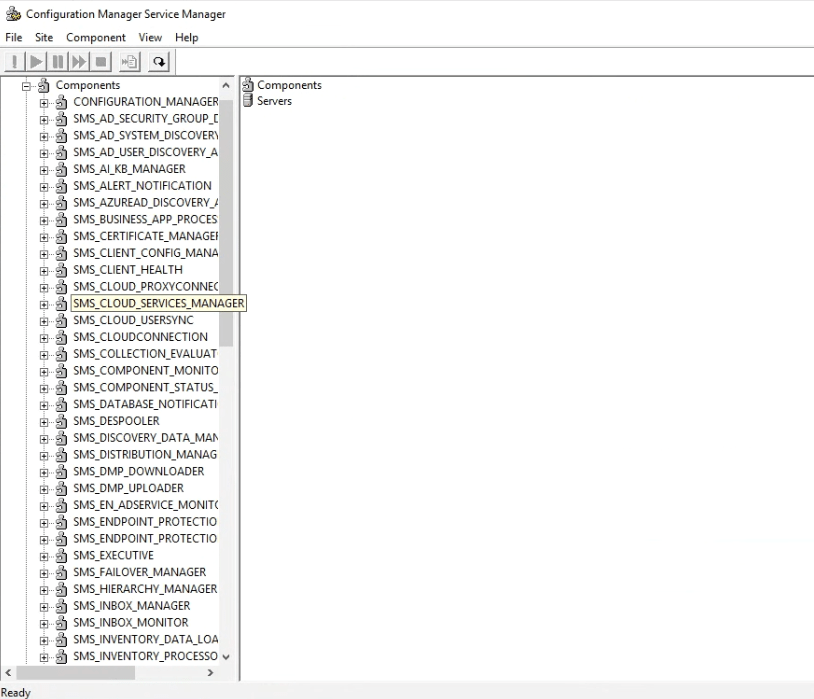

One thing I did notice was that if I ran the Configuration Manager Service Manager

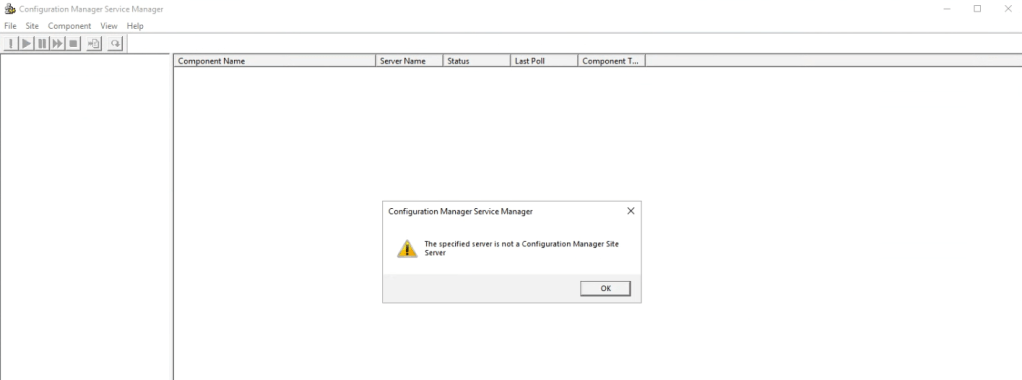

then I was getting a “This specified server is not a Configuration Manager Site Server” message.

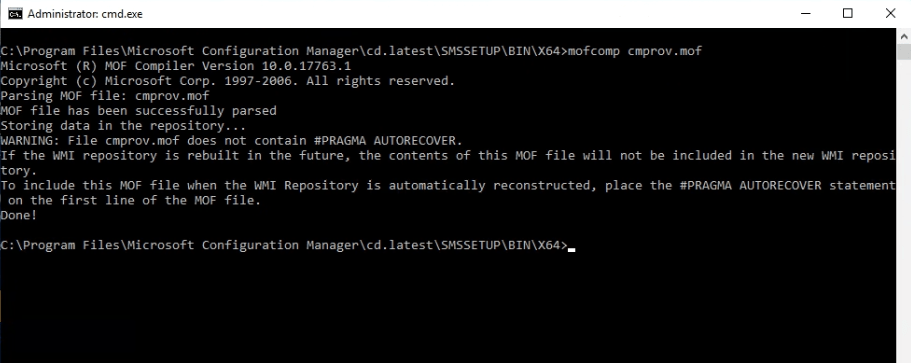

The fix here was to compile cmprov.mof under the C:\Program Files\Microsoft Configuration Manager\cd.latest\SMSSETUP\BIN\X64 folder.
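Compiling a MOF is done with mofcomp.exe, which ships with Windows. A small sketch of what I mean is below; Invoke-MofCompile is a hypothetical wrapper that just checks the file exists before calling mofcomp, and the folder is the one from my site server, so yours may differ.

```powershell
# Hypothetical wrapper: compiles a MOF file from the given folder with mofcomp.exe.
function Invoke-MofCompile {
    param(
        [Parameter(Mandatory)][string]$Directory,
        [string]$MofFile = 'cmprov.mof'
    )
    $path = Join-Path -Path $Directory -ChildPath $MofFile
    if (-not (Test-Path -Path $path -PathType Leaf)) {
        throw "MOF file not found: $path"
    }
    # mofcomp.exe lives in %windir%\System32\wbem, which is on PATH by default
    & mofcomp.exe $path
}
```

From an elevated session: Invoke-MofCompile -Directory 'C:\Program Files\Microsoft Configuration Manager\cd.latest\SMSSETUP\BIN\X64'.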

So I’m up and running for now. I might encounter other issues as I start to use the upgraded server and I’ll report back to here and add in any further steps I had to take.

My next stop is to upgrade that old SQL Server 2014 install, but that’s one for another day. For now I will let the dust settle.

Hope this is useful for you. As always, check the official MS docs, you may have different scenarios to consider when doing this upgrade. Upgrade on-premises infrastructure – Configuration Manager | Microsoft Docs

Updates

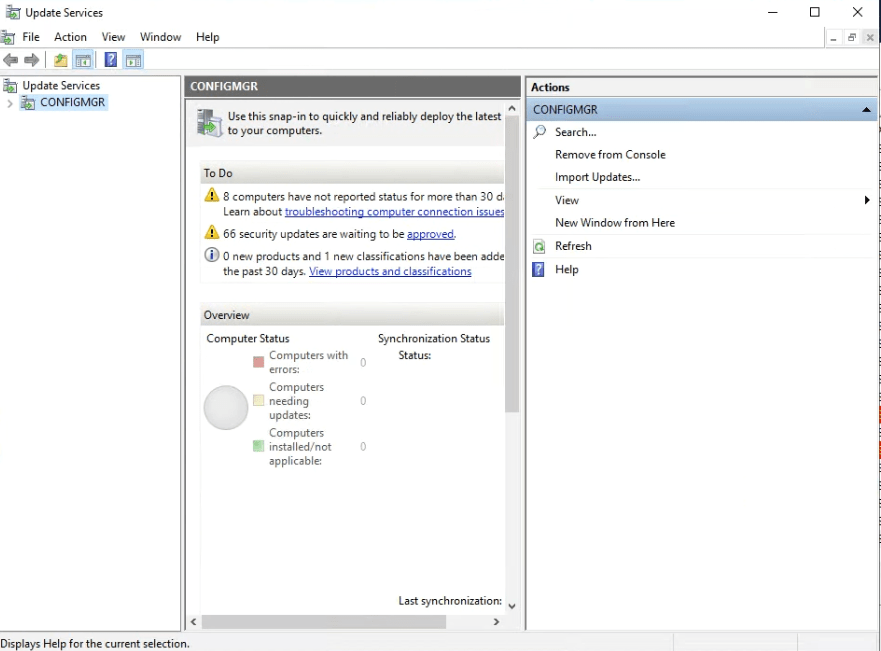

So I noticed that the WSUS was moaning again. I also noticed if I tried to fire up the WSUS console it just sat there trying to load the snap-in.

I decided to remove the WSUS role, re-add it and then run the post-install process. This time it succeeded without moaning about SUSDB.

I could load the WSUS console…

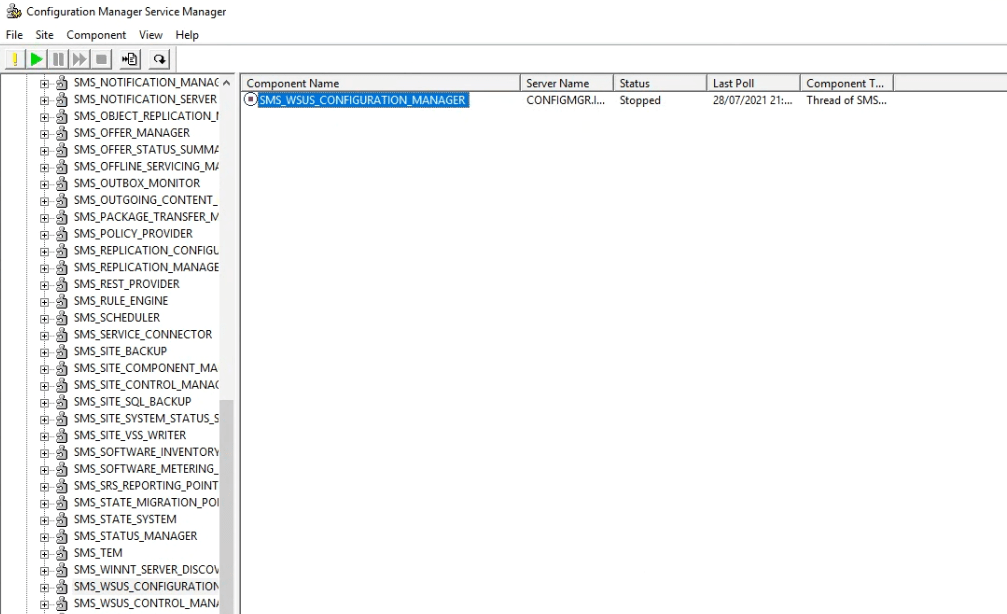

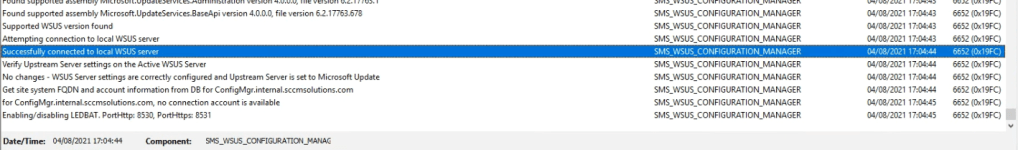

I stopped the SMS_WSUS_CONFIGURATION_MANAGER component in the Service Manager, kicked it back into life, and WCM.log reported back a bit healthier, with a successful connection to the WSUS server.

I’ll let the dust settle on this again to see if anything else flags up any impact due to the upgrade and update the blog with any fixes.

Update 2

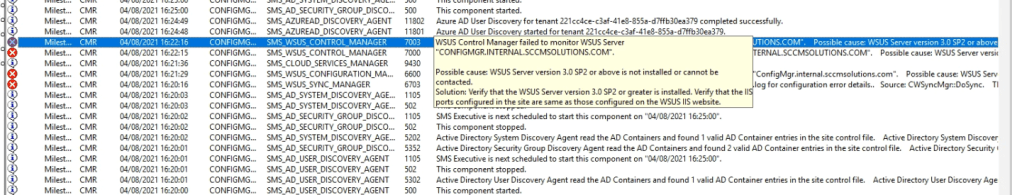

Seems I wasn’t out of the woods completely. I noticed a day or so later that the WSUS and MP were both moaning. It’s taken me a few days to get the time to take a look, but here goes.

WSUS was moaning about a possible IIS configuration issue. If IIS is an issue, then this could also explain my MP reporting problems as well.

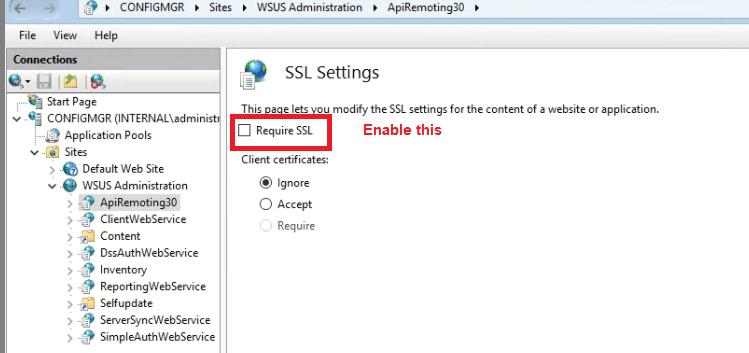

I had a quick look at the WSUS configuration. I’m running in HTTPS mode with internal PKI. I noticed that the SSL Settings had reverted post upgrade.

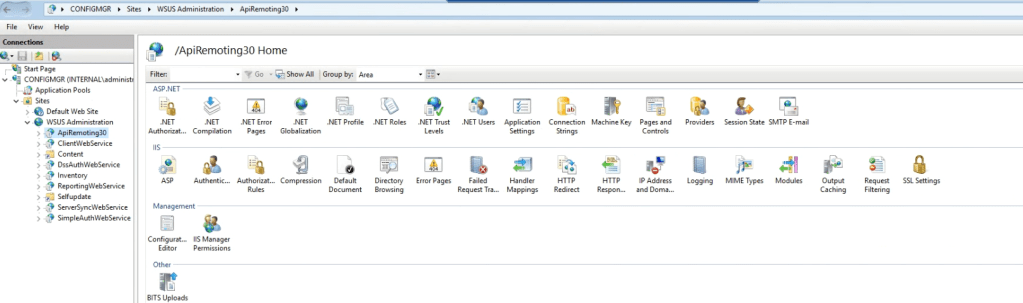

In IIS Manager, go into the WSUS Administration site and check the APIRemoting30, ClientWebService, DSSAuthWebService, ServerSyncWebService, and SimpleAuthWebService virtual directories. Each of those needs the SSL Settings set to Require SSL.

Checking each one, none of them were. So I enabled the check box on each and applied the setting.
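Ticking five check boxes by hand is fine, but the same setting can be applied with the WebAdministration module. A sketch follows, assuming the WSUS site in IIS is called WSUS Administration (it will be different if WSUS was installed into the Default Web Site). The helper names are mine.

```powershell
# The five virtual directories that need Require SSL.
$WsusSslVirtualDirs = @(
    'APIRemoting30',
    'ClientWebService',
    'DSSAuthWebService',
    'ServerSyncWebService',
    'SimpleAuthWebService'
)

# Hypothetical helper: builds the IIS location path for each virtual directory.
function Get-WsusSslLocations {
    param([string]$SiteName = 'WSUS Administration')   # assumption: default WSUS site name
    $WsusSslVirtualDirs | ForEach-Object { "$SiteName/$_" }
}

# Hypothetical helper: sets sslFlags to Ssl (Require SSL) on each location.
function Set-WsusRequireSsl {
    param([string]$SiteName = 'WSUS Administration')
    Import-Module WebAdministration
    foreach ($location in (Get-WsusSslLocations -SiteName $SiteName)) {
        Set-WebConfigurationProperty -PSPath 'MACHINE/WEBROOT/APPHOST' -Location $location `
            -Filter 'system.webServer/security/access' -Name 'sslFlags' -Value 'Ssl'
    }
}
```

Run Set-WsusRequireSsl from an elevated session on the WSUS server, then confirm in IIS Manager that the Require SSL box is ticked on each virtual directory.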

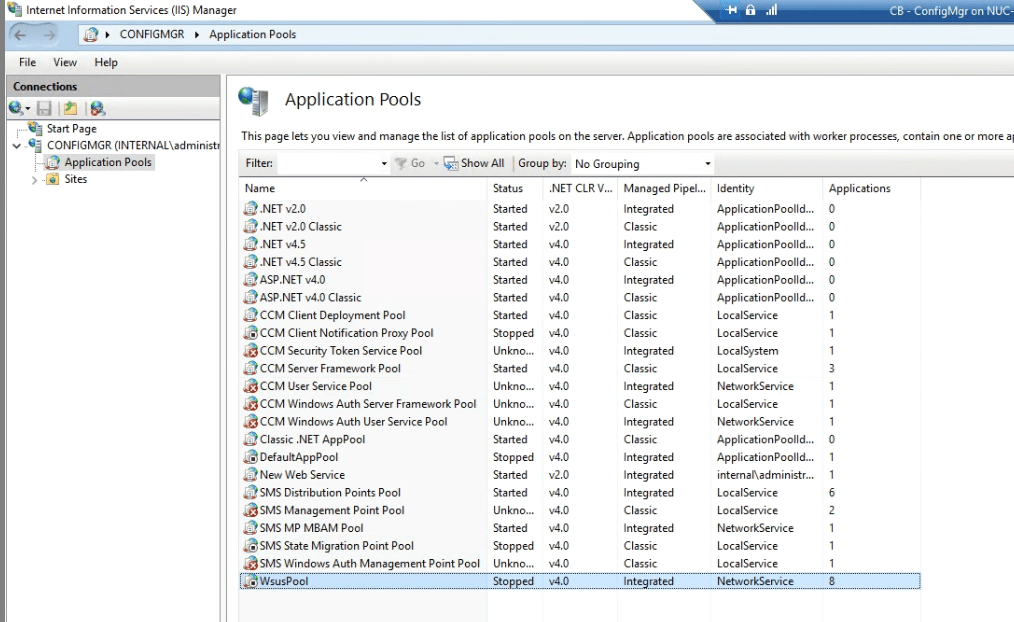

After enabling this, I checked WCM.log on the site server, which gave me a sea of red every hour: a 503 error. OK, I’ve been here before with other ConfigMgr sites. It’s more than likely a WSUS AppPool issue.

Sure enough the app pool for WSUS was in a stopped state.
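Checking the pool state does not need the IIS console. A tiny sketch, assuming the pool has the usual name of WsusPool; Get-WsusAppPoolState is a hypothetical wrapper around the WebAdministration cmdlet.

```powershell
# Hypothetical helper: returns the state (for example Started or Stopped) of the WSUS app pool.
function Get-WsusAppPoolState {
    param([string]$PoolName = 'WsusPool')   # assumption: default WSUS pool name
    Import-Module WebAdministration
    (Get-WebAppPoolState -Name $PoolName).Value
}
```

If Get-WsusAppPoolState returns Stopped, Start-WebAppPool -Name 'WsusPool' will start it, although on its own that does nothing to prevent the pool from stopping again.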

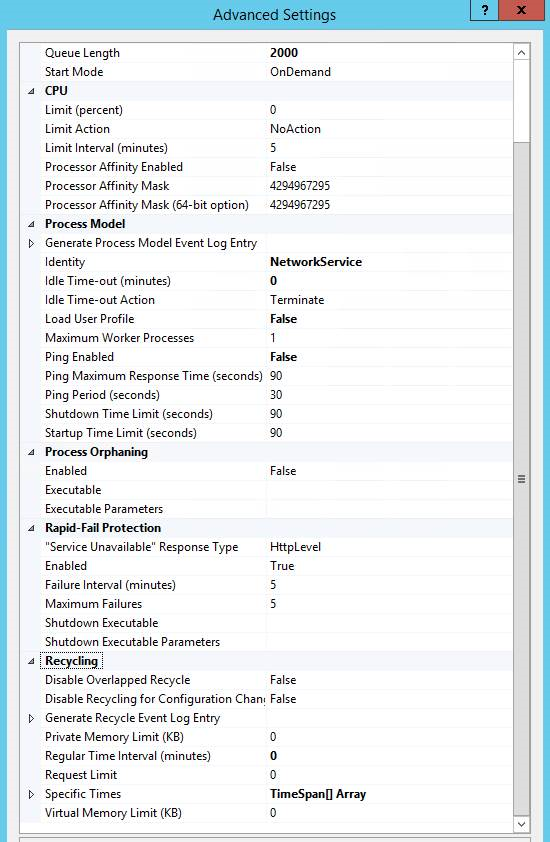

Rather than just start this, and potentially see it keel over again, I decided to follow the MS guidance on unresponsive app pool and add in the settings advised here.

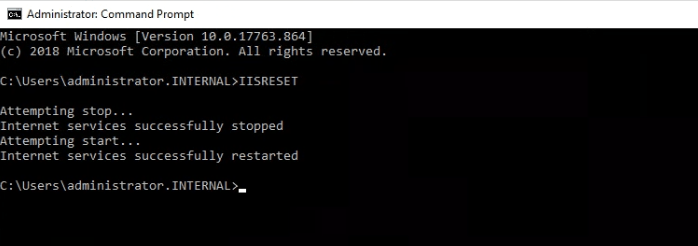

I restarted IIS and the SMS Exec services to speed things up and to see if the problem was resolved.

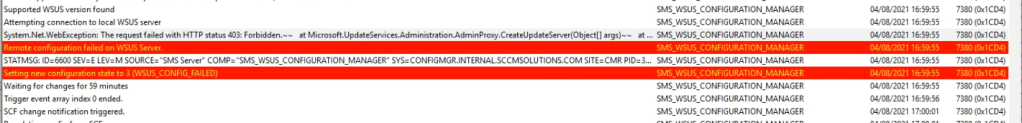

Taking a look at the WCM log file, the error had gone only to be replaced by another. Now a 403 error.

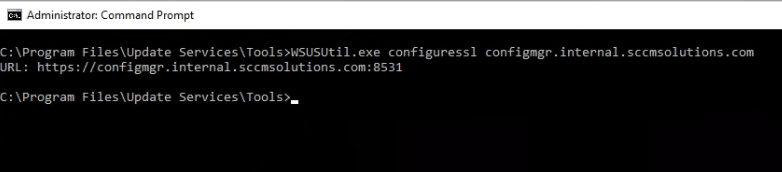

This was my bad, because after enabling the SSL setting in WSUS, I hadn’t run the following command:

"C:\Program Files\Update Services\Tools\WSUSUtil.exe" configuressl <Intranet fully qualified domain name (FQDN) of the software update point site system>

After running this, it was a case of re-running the IISRESET command.

Things then looked a little healthier in the WCM log file

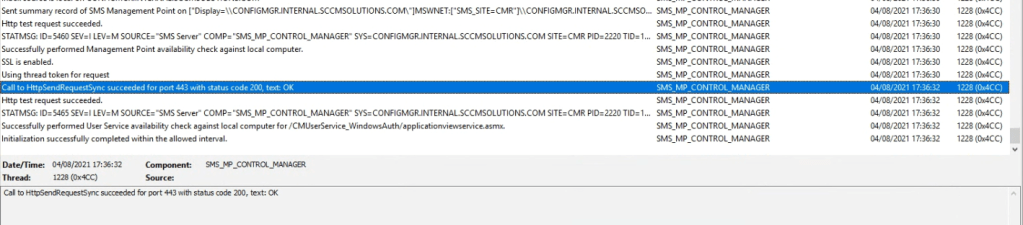

The MP should kick back into life now with the IIS changes in place. Indeed, MPControl.log reports success.

Update 3

I recently upgraded a site server OS for a customer who had MDT integration enabled. We soon discovered that we were unable to read task sequences which had MDT components in them. Simple fix here: remove the MDT integration from the site and add it back in.

If you need to know how to do this refer to my blog on MDT integration here and follow from the section Integrate MDT with ConfigMgr.

Update – 22.01.23

I was contacted on Twitter by Zach Sattler, who had used the blog on an upgrade at his company’s site. He had the following feedback on his upgrade.

- ‘Following the before upgrade steps from the MS doc I installed the latest cumulative updates. I then uninstalled the Windows Management Framework 5.1 (KB3191564) from Installed Updates. Would be good to mention this step before starting the in-place upgrade as it can cause issues with WMI. This could potentially have been what caused the issues you mentioned at the start.’ This has been added into the blog post in the appropriate step.

- ‘When attempting to remove the WSUS role I encountered the following issue:

I don’t know if this was caused by the removal of WMF 5.1 or what was going on. I found a couple of options online (https://enterinit.com/failed-to-open-the-runspace-pool-the-server-manager-winrm-plug-in-might-be-corrupted-or-missing/) but neither of them resolved the issue. Ultimately, I opened PowerShell as Admin and ran ‘Uninstall-WindowsFeature -Name UpdateServices’ and that worked as expected. I still am unsure why the GUI was failing.

- After the OS upgrade, in addition to the services listed in the after upgrade steps from the MS doc, I discovered that my SQL services (MSSQLSERVER/SQLSERVERAGENT) were not running. They were already set to automatic. I started them manually and they started as expected, but this prevented my Site Reset from continuing. As soon as the SQL services were running the Site Reset finished as expected.

- I ran into a few of the other gotchas you already mentioned (WMI Namespace permissions / WSUS post install tasks / wsusutil.exe), and then finally I was still having an issue with the Management Point role failing to reinstall (mpMSI.log). After some searching online I found this blog (https://blog.thomasmarcussen.com/mp-control-manager-installation-fails/) suggesting removing BITS and adding it back again and that worked. After the reinstallation of the role and restarting the SMS_SITE_COMPONENT_MANAGER component in ConfigMgr Service Manager the installation of the MP succeeded.

Good write up. Always useful to know the fixes for these errors for future reference. BTW, I upgraded my lab to SQL 2019 from SQL 2014 about a year and a half ago (at least, and technically I think, before it was fully supported for ConfigMgr) and saw no issues at all so you should have an easier ride with that one!

Cheers Simon. Yes the SQL upgrade is usually a bit smoother for sure. Thanks Paul

Wow great troubleshooting, all this makes me nervous upgrading.

Probably my messy lab John tbh. Lol

I ran into issues with pretty much everything you mentioned here, plus not being able to upgrade directly from 2012r2 to 2019. After several troubleshooting attempts, I had to go to 2016 first, then up to 2019. Never did figure out why, but it was nearly 2 years ago when 2019 first came out.

Great blog, I like your style of noting not just the final process, but the thinking behind each step in the Updates sections.

Thanks for the comment. Cheers Paul

C:\Program Files\Microsoft Configuration Manager\bin\X64>mofcomp smsprov.mof returns wmi root\sms value

Vitalii, thank you very much. This worked for me.

This helped me as well! The Configuration Manager – Site Reset did not return the root/SMS at all for me.

Thank you! I needed to do that

Thanks very much. I needed to do that, too.

Amazing article! Upgraded a server from 2012 R2 to 2019 today. Went really smooth. Thank you very much for this great post!

Great stuff Ooof! Glad it helped. Paul

HI, just for clarification, the sentence — Now, if you have WSUS running on the server, remove the role, but first deattach the SUSDB so it can be readded after upgrade — is this mandatory if the SUSDB is located on the same server than wsus servicies ?? or is it mandatory if the susdb is located on a separated MSSQL server too ??

If I’m reading you correctly, Francisco, then I believe only when located on the same server as wsus services. Cheers Paul

Oddly, SMS Admins was missing from the WMI security properties, so I added the group. Console could not connect!

Below action will also help to re-enable / re-appear SMS WMI namespace:

SMSProv.log was not updating.

We opened a cmd prompt as an admin and ran below command to see if it’s running,

Tasklist.exe -M smsprov.dll

It was not running, so we ran the commands below from the SMS Provider install location (SMS/bin/x64) one after another, which re-register the DLLs and recompile the MOF files.

regsvr32 /s extnprov.dll

regsvr32 /s smsprov.dll

mofcomp smsprov.mof

We ran Tasklist.exe -M smsprov.dll and it started running again,

We were able to open the console successfully after that.

Excellent info thanks Vishal!

Excellent guide – followed very successfully! My SMS namespace disappeared but came back following the Site Reset – although this wasn’t immediate, it took some time after the reset came back saying it was complete, and an extra restart. I found I did need to add Software\Microsoft\SMS back in to HKLM\SYSTEM\CurrentControlSet\Control\SecurePipeServers\Winreg\AllowedPaths on my Site server as my MP wasn’t functioning fully – it was showing Critical with 0kb space. It also needed an extra restart. My PXE server seemed not to be responding either and also needed a restart (not sure if actually related or just the usual PXE rubbish!)

Thanks for the info BOB. Good to know how others have tackled the upgrade. Cheers Paul